A while back, I posted about some testing we were doing of various software load balancers for WordPress.com. We chose Pound and have been using it for the past 2-ish years. We started to run into some issues, however, so we started looking elsewhere. Some of these problems were:

- Lack of true configuration reload support made managing our 20+ load balancers cumbersome. We had a solution (hack) in place, but it was getting to be a pain.

- When something would break on the backend and cause 20-50k connections to pile up, the thread creation would cause huge load spikes and sometimes render the servers useless.

- As we started to push 700-1000 requests per second per load balancer, it seemed things started to slow down. Hard to get quantitative data on this because page load times are dependent on so many things.

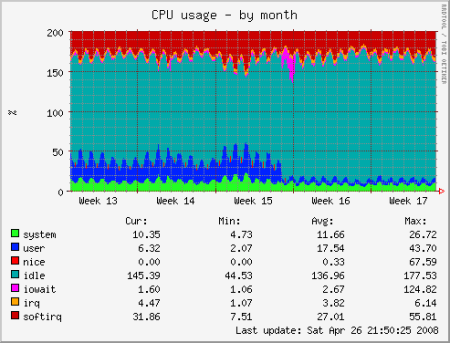

So… A couple of weeks ago we finished converting all our load balancers to Nginx. We have been using Nginx for Gravatar for a few months and have been impressed by its performance, so moving WordPress.com over was the obvious next step. The graph above shows CPU usage before and after the switch. Pretty impressive!

Before choosing Nginx, we looked at HAProxy, Perlbal, and LVS. Here are some of the reasons we chose Nginx:

- Easy and flexible configuration (true config “reload” support has made my life easier)

- Can also be used as a web server, which allows us to simplify our software stack (we are not using Nginx as a web server currently, but may switch at some point).

- The only software we tested that could handle 8000 requests/second of live traffic (not a benchmark) on a single server.
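
For readers who have not seen Nginx used this way, here is a minimal sketch of a reverse-proxy load balancer configuration. It is an illustration only, not the actual WordPress.com configuration: the pool name `backend_pool`, the backend addresses, and the connection limit are placeholder assumptions.

```nginx
# Illustrative sketch only; all names, addresses, and limits are hypothetical.
events {
    # Maximum simultaneous connections per worker process (placeholder value).
    worker_connections 1024;
}

http {
    # Pool of backend web servers; requests are distributed round-robin by default.
    upstream backend_pool {
        server 10.0.0.11:80;
        server 10.0.0.12:80;
    }

    server {
        listen 80;

        location / {
            # Forward every request to one of the servers in the pool.
            proxy_pass http://backend_pool;

            # Preserve the original Host header and client address for the backends.
            proxy_set_header Host $host;
            proxy_set_header X-Real-IP $remote_addr;
        }
    }
}
```

The configuration reload mentioned in the list works by sending a `HUP` signal to the Nginx master process (newer versions also accept `nginx -s reload`). The master re-reads the configuration, starts new workers with it, and lets the old workers finish their in-flight requests before exiting. Running `nginx -t` beforehand checks the file for syntax errors without touching the running server.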