Since WordPress.com broke 10 million pageviews today, I thought it would be a good time to talk a little bit about keeping track of all the servers that run WordPress.com, Akismet, WordPress.org, Ping-o-matic, etc. We currently have over 300 servers online in 5 different data centers across the country. Some of these are colocated and others are with dedicated hosting providers, but the bottom line is that we need to keep track of them all as if they were our children! We use Nagios for server health monitoring, Munin for graphing various server metrics, and a wiki to keep track of all the server hardware specs, IPs, vendor IDs, etc. All of these tools have served us well up until now, but there have been some scaling issues.

- MediaWiki — Like Wikipedia, we run MediaWiki, and we have a page with a table that contains all of our server information, from hardware configuration to physical location, price, and IP information. Unfortunately, MediaWiki tables don’t seem to be very flexible, and you cannot perform row- or column-based operations on them. This makes simple things, such as counting how many servers we have, somewhat time-consuming. Also, when you get to 300 rows, editing the table becomes a very tedious task, and it is very easy to make a mistake that throws the entire table out of whack. Even dividing the data into a few tables doesn’t make it much easier. In addition, there is no concept of unique records (nor do I really think there should be), so it is very easy to end up with 2 servers listed with the same IP or the same hostname.

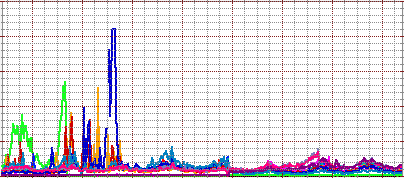

- Munin — Munin has become an invaluable tool for us when troubleshooting issues and planning future server expansion. Unfortunately, scaling Munin hasn’t been the best experience. At about 100 hosts, we started running into disk IO problems caused by the various data collection, graphing, and HTML output jobs Munin runs. It seemed the solution was to switch to the JIT graphing model, which draws the graphs only when you view them. Unfortunately, this only seemed to make the interface excruciatingly slow and didn’t help the IO problems we were having. At about 150 hosts, we moved Munin to a dedicated server with 15k RPM SCSI drives in a RAID 0 array in an attempt to give it some more breathing room. That worked for a while, but we then started running into problems where polling all the hosts actually took longer than the monitoring interval, so we were missing some data. Since then, we have resorted to removing some of the things we graph on each server in order to lighten the load. Every once in a while, we still run into problems where a server is a little slow to respond and it causes the polling to take longer than 5 minutes. Obviously, better hardware and fewer graphed items aren’t a scalable solution, so something is going to have to change. We could put a Munin monitoring server in each data center, but we currently sum and stack graphs across data centers, and I am not sure if or how that works when the data is on different servers. The other big problem I see with Munin is that if one host’s graphs stop updating and that host is part of a totals graph, the totals graph just stops working. This happened today, which was very frustrating.

- Nagios — I feel this has scaled the best of the 3. We have this running on a relatively light server and have no load or scheduling issues. I think it is time, however, to look at moving to Nagios’ distributed monitoring model. The main reason is that we have multiple data centers, each of which has its own private network, so it is important for us to monitor each of these networks independently in addition to the public internet connectivity to each data center. The simplest way to do this is to put a Nagios monitoring node in each data center, which can then monitor all the servers in that facility and report the results back to the central monitoring server. Splitting up the workload should also allow us to scale to thousands of hosts without any problems.
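The Munin polling problem described above comes down to simple arithmetic: if the time to poll every host exceeds the 5-minute interval, samples get dropped. Here is a minimal sketch of that check, assuming a poller that visits its hosts one after another and that work can be split across several pollers (for example, one per data center). The function names and the per-host timings are hypothetical and only illustrate the reasoning; this is not a description of how Munin or Nagios schedule work internally.

```python
POLL_INTERVAL_SECONDS = 5 * 60  # the 5-minute interval mentioned in the post


def cycle_seconds(per_host_seconds, workers=1):
    """Estimate how long one polling cycle takes.

    Each worker polls its hosts one after another, and the cycle ends when
    the busiest worker finishes. Hosts are handed out slowest-first to the
    currently least-loaded worker (a simple greedy approximation).
    """
    loads = [0.0] * workers
    for seconds in sorted(per_host_seconds, reverse=True):
        least_loaded = loads.index(min(loads))
        loads[least_loaded] += seconds
    return max(loads)


def fits_interval(per_host_seconds, workers=1, interval=POLL_INTERVAL_SECONDS):
    """Return True if a full polling cycle finishes within the interval."""
    return cycle_seconds(per_host_seconds, workers) <= interval


if __name__ == "__main__":
    # Made-up timings: 299 hosts that answer quickly, plus one slow host.
    timings = [0.9] * 299 + [40.0]
    print("one poller fits:", fits_interval(timings))
    print("two pollers fit:", fits_interval(timings, workers=2))
```

With these made-up numbers, a single slow host is enough to push a lone poller past the interval, while splitting the hosts across two pollers leaves plenty of headroom. That is the same argument as the one for putting a monitoring node in each data center.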

Anyone have recommendations on how to better deal with these basic server monitoring needs? I have looked at Zabbix, Cacti, Ganglia, and some others in the past, but have never been super-impressed. Barring any major revelations in the next couple of weeks, I think we are going to continue to scale out Nagios and Munin and replace the wiki page with a simple PHP/MySQL application that is flexible enough to integrate into our configuration management and deployment tools.
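To show why a small database fixes the two wiki-table problems (counting servers and duplicate IPs or hostnames), here is a minimal sketch. It uses Python with the standard-library `sqlite3` module rather than the PHP/MySQL stack mentioned above, purely so the example is self-contained. The table layout, column names, and helper functions are all assumptions made for illustration, not the application we plan to build.

```python
import sqlite3


def open_inventory(path=":memory:"):
    """Open (or create) the inventory database and make sure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS servers (
            hostname   TEXT NOT NULL UNIQUE,  -- no two servers share a hostname
            ip         TEXT NOT NULL UNIQUE,  -- no two servers share an IP
            datacenter TEXT NOT NULL
        )
        """
    )
    return conn


def add_server(conn, hostname, ip, datacenter):
    """Insert one server; return False if the hostname or IP is already taken."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO servers (hostname, ip, datacenter) VALUES (?, ?, ?)",
                (hostname, ip, datacenter),
            )
        return True
    except sqlite3.IntegrityError:
        return False


def count_by_datacenter(conn):
    """Return a {datacenter: server_count} mapping."""
    rows = conn.execute(
        "SELECT datacenter, COUNT(*) FROM servers "
        "GROUP BY datacenter ORDER BY datacenter"
    )
    return dict(rows.fetchall())
```

Counting servers becomes a single query instead of a manual pass over 300 table rows, and the `UNIQUE` constraints make the database reject a second server with the same IP or hostname instead of silently accepting it.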