As you may have heard, from March 3rd into March 4th, 2011, WordPress.com was targeted by a rather large distributed denial-of-service (DDoS) attack. I am part of the systems and infrastructure team at Automattic, and it is our team's responsibility to a) mitigate the attack, b) communicate status updates and details of the attack, and c) figure out how to better protect ourselves in the future. We are still working on the third part, but I wanted to share some details here.

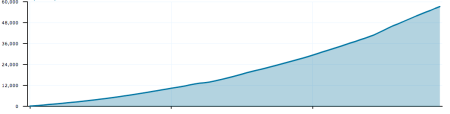

One of our hosting partners, Peer1, provided these InMon graphs to help illustrate the timeline. What we saw was not one single attack but six separate attacks, beginning at 2:10AM PST on March 3rd. All of them were directed at a single site hosted on WordPress.com's servers. The first graph shows the size of the attack in bits per second (bandwidth), and the second shows packets per second. The different colors represent source IP ranges.

The first five attacks caused minimal disruption to our infrastructure because they were smaller and shorter. The largest attack began at 9:20AM PST and was mostly blocked by 10:20AM PST. The attacks were TCP floods directed at port 80 of our load balancers. These attacks try to fill network links and overwhelm routers, switches, and servers with "junk" packets, which prevents legitimate requests from getting through.

The last TCP flood (the largest one on the graph) saturated the links of some of our providers and overwhelmed the core network routers in one of our data centers. In order to block the attack effectively, we had to work directly with our hosting partners and their Tier 1 bandwidth providers to filter the attacks upstream. This process took an hour or two.

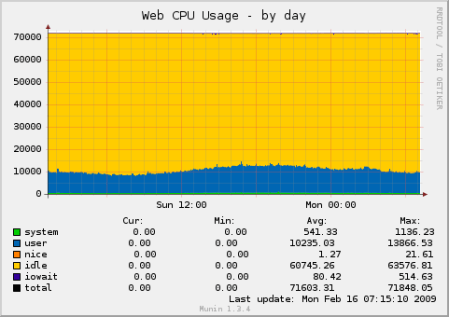

Once the last attack was mitigated at around 10:20AM PST, we saw a lull in activity. On March 4th around 3AM PST, the attackers changed tactics: rather than a TCP flood, they launched an HTTP resource consumption attack. Using a botnet of thousands of compromised PCs, they made many thousands of simultaneous HTTP requests in an attempt to overwhelm our servers. The source IPs were completely different from those in the previous attacks, but mostly still from China. Fortunately for us, the WordPress.com grid harnesses over 3,600 CPU cores in our web tier alone, so we were able to quickly mitigate this attack and identify the target.
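Unlike the TCP floods, an HTTP flood carries a `Host` header, so the target can be read straight out of request logs. The sketch below is only an illustration of that idea, not our actual tooling: it assumes a hypothetical, simplified log format of `<client_ip> <host> <path>` per line, and the function name `top_targets` and the sample hostnames are made up.

```python
from collections import Counter, defaultdict


def top_targets(log_lines, n=3):
    """Return (host, request_count, distinct_client_ips) for the n busiest hosts.

    Assumes a hypothetical log format: "<client_ip> <host> <path>" per line.
    Real access logs would need a proper parser.
    """
    requests = Counter()
    clients = defaultdict(set)
    for line in log_lines:
        parts = line.split()
        if len(parts) < 2:
            continue  # skip malformed lines
        ip, host = parts[0], parts[1]
        requests[host] += 1
        clients[host].add(ip)
    # Many requests from many distinct IPs to one host is the botnet signature.
    return [(host, count, len(clients[host])) for host, count in requests.most_common(n)]


sample = [
    "198.51.100.7 victim.example.com /",
    "203.0.113.9 victim.example.com /?s=abc",
    "192.0.2.44 other.example.com /about",
    "198.51.100.8 victim.example.com /feed",
]
print(top_targets(sample, n=2))
# [('victim.example.com', 3, 3), ('other.example.com', 1, 1)]
```

Counting distinct client IPs alongside raw request counts helps separate a botnet (many sources, one host) from a single heavy crawler (one source, many requests).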

We see denial-of-service attacks every day on WordPress.com, and 99.9% of them have no user impact. This type of attack made it difficult to determine the target at first, because the incoming DDoS packets carried no information identifying which site they were aimed at. WordPress.com hosts over 18 million sites, so finding the needle in the haystack is a challenge. This attack was large, in the 4-6 Gbit/s range, but not the largest we have seen: in 2008, for example, we experienced a DDoS in the 8 Gbit/s range.

Some attacks are politically motivated, and we initially suspected this one was, but we now have no reason to believe it was. We are big proponents of free speech and aim to provide a platform that supports that freedom. We even have dedicated infrastructure for sites under active attack. Some of these attacks last for months, and this setup lets us keep those sites online without putting our other users at risk.

We also don't put all of our eggs in one basket. WordPress.com alone has 24 load balancers in 3 different data centers that serve production traffic. These load balancers are deployed across different network segments and IP ranges. As a result, some sites were affected for only a couple of minutes (when our provider's core network infrastructure failed) over the entire course of these attacks. We are working on ways to improve this segmentation even more.

If you have any questions, feel free to leave them in the comments and I will try to answer them.