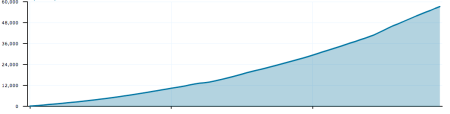

We are in the process of deploying some new infrastructure to store the 150+GB of new content (media only, not including text) uploaded to WordPress.com daily.

After some searching and testing, we have decided to use MogileFS, open source software developed in part by our friends at Six Apart. Our initial deployment is going to be 180TB of storage in a single data center, and we plan to expand this to multiple data centers in early 2010. The options for getting that amount of storage affordably are limited. We thought about building Backblaze devices, but decided that the ongoing management of these in our hosting environment would be prohibitively complicated. We eventually settled on Dell’s PowerVault MD series. Our configuration consists of:

- 4 x Dell R710 (32GB RAM / 2 x Intel E5540 / 2 x 146GB SAS RAID 1)

- 4 x Dell MD3000 (15 x 1TB 7200 RPM HDD each)

- 8 x Dell MD1000 (15 x 1TB 7200 RPM HDD each)

Each Dell R710 is connected to an MD3000, and 2 MD1000s are connected to each MD3000. The end result is 4 self-contained units, each providing 45TB of storage for a total of 180TB.
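As a quick sanity check on those numbers, here is a minimal sketch of the arithmetic. The variable names are made up for illustration, and the figures are raw capacity only (no filesystem or replication overhead is accounted for).

```shell
#!/bin/bash
# Raw capacity of the layout described above.
units=4                  # each unit: 1 R710 + 1 MD3000 + 2 MD1000
enclosures_per_unit=3    # 1 MD3000 + 2 MD1000
drives_per_enclosure=15
tb_per_drive=1

per_unit_tb=$(( enclosures_per_unit * drives_per_enclosure * tb_per_drive ))
total_tb=$(( units * per_unit_tb ))

echo "per unit: ${per_unit_tb}TB, total: ${total_tb}TB"
```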

Our proof of concept was deployed on a single Dell 2950 connected to an MD1000, and things worked almost flawlessly. We could use all of our existing tools to monitor, manage, and configure the devices when needed. Little did I know the MD3000s would be so much more of a pain 🙂 Since we are using MogileFS, which handles the distribution of files across various hosts and devices, we wanted these devices set up in what I thought was a relatively simple JBOD configuration. Each drive would be exported as a device to the OS, then we would mount 45 devices per machine and MogileFS would take care of the rest. It didn’t exactly work that way.
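The layout we were aiming for can be sketched roughly as follows. This is a dry run that only prints commands. The function name `print_mount_plan`, the `/var/mogdata` root, and the `devN` numbering follow the usual MogileFS convention but are assumptions here, not a record of our actual setup scripts.

```shell
#!/bin/bash
# print_mount_plan ROOT FIRST_ID DEVICE...
# Prints (does not run) one mkdir + mount command per device, numbering the
# mount points dev<FIRST_ID>, dev<FIRST_ID+1>, ... under ROOT.
print_mount_plan() {
    local root=$1 id=$2
    shift 2
    local dev
    for dev in "$@"; do
        echo "mkdir -p ${root}/dev${id} && mount ${dev} ${root}/dev${id}"
        id=$(( id + 1 ))
    done
}
```

For example, `print_mount_plan /var/mogdata 1 /dev/sdb1 /dev/sdc1` prints two lines, the second mounting `/dev/sdc1` on `/var/mogdata/dev2`. Reviewing the printed plan before running anything makes it harder to mount the wrong device by accident.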

When the hardware was initially deployed to us, it was configured in a high availability (HA) setup, with each controller on the MD3000 connected to a controller on the R710. This way, if a controller fails, in theory the storage is still accessible. The problem with this type of setup is that in order to make it work flawlessly, you need to use the Dell multi-path proxy and mpt drivers, not the ones provided by the Linux kernel. Dell’s drivers don’t work on Debian. Initially, without multipath configured, some confusing stuff happened: we had 90 devices detected by the OS (/dev/sdb through /dev/sdcn), but every other device was unreachable. After some trial and error with various multipath configurations, and some help, I ended up with this:

    apt-get install multipath-tools

Our multipath.conf:

    defaults {
        getuid_callout "/lib/udev/scsi_id -g -u -s /block/%n"
        user_friendly_names on
    }

    devices {
        device {
            vendor DELL*
            product MD3000*
            path_grouping_policy failover
            getuid_callout "/lib/udev/scsi_id -g -u --device=/dev/%n"
            features "1 queue_if_no_path"
            path_checker rdac
            prio_callout "/sbin/mpath_prio_rdac /dev/%n"
            hardware_handler "1 rdac"
            failback immediate
        }
    }

    blacklist {
        device {
            vendor DELL.*
            product Universal.*
        }
        device {
            vendor DELL.*
            product Virtual.*
        }
    }

Then flush any existing multipath maps, rebuild them, and start the daemon:

    multipath -F
    multipath -v2
    /etc/init.d/multipath-tools start

This gave me a bunch of device names in /dev/mapper/* which I could access, partition, format, and mount. A few things to note:

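- user_friendly_names doesn’t seem to work. The devices were all still labeled by their WWID even with that option enabled. (The multipath.conf man page lists yes and no as the values for this option, so the "on" in our config may simply not have been recognized.)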

- The status of the paths as shown by multipath -ll seemed to change over time (from active to ghost). Not sure why.

- Even with all of this set up and working, I was still seeing the occasional I/O error and path failure reported in the logs.

After a few hours of “fun” with this, I decided that it wasn’t worth the hassle or complexity. Since we have redundant storage devices anyway, we would just configure the devices in “single path” mode, mount them directly, and forgo multipath. Not so fast…according to Dell engineers, “single path mode” is not supported. Easy enough, let’s unplug one of the controllers, creating our own “single path mode”, and everything should work, right? Sort of.

If you just go and unplug the controller while everything is running, nothing works. The OS needs to re-scan the devices in order to address them properly. The easiest way for this to happen is to reboot (sure this isn’t Windows?). After a reboot, the OS properly saw 45 devices (/dev/sdb – /dev/sdau), which is what I would have expected. The only problem was that every other device was inaccessible! It turns out that the MD3000 tries to balance the devices across the 2 controllers, and half of the drives had been assigned a preferred path of controller 1, which was unplugged. After some additional MD3000 configuration, we were able to force all of the devices to prefer controller 0, and everything was accessible once again.
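One way to spot the "every other device is inaccessible" pattern quickly is to try reading a single sector from each device. The sketch below is only an illustration (the function name `report_unreadable` is made up) and assumes that a device on a non-preferred path fails a plain read, which is what we observed.

```shell
#!/bin/bash
# report_unreadable DEVICE...
# Tries to read one 512-byte block from each device and prints the ones
# where the read fails. Nothing is ever written to the devices.
report_unreadable() {
    local dev
    for dev in "$@"; do
        if ! dd if="$dev" of=/dev/null bs=512 count=1 2>/dev/null; then
            echo "$dev"
        fi
    done
}
```

Run as root, something like `report_unreadable /dev/sd[b-z] /dev/sda[a-u]` would cover the device range mentioned above. An empty result means every device answered the read.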

The only other thing worth noting here is that the MD3000 exports an additional device that you may not recognize:

scsi 1:0:0:31: Direct-Access DELL Universal Xport 0735 PQ: 0 ANSI: 5

For us this was LUN 31, and the number doesn’t seem to be user-configurable, but I suppose other hardware may assign a different LUN. This is a management device for the MD3000 and not a device that you can or should partition, format, or mount. We just made sure to skip it in our setup scripts.
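Skipping that device can be done by looking at the model string the kernel exposes in sysfs rather than hard-coding a LUN. The following is a minimal sketch, not our actual setup script. The function name `list_data_disks` is hypothetical, and it assumes the model reads "Universal Xport" as in the kernel log line above.

```shell
#!/bin/bash
# list_data_disks [SYSFS_BLOCK_DIR]
# Prints /dev/sdX for every SCSI disk whose model is not "Universal Xport".
# SYSFS_BLOCK_DIR defaults to /sys/block; it is a parameter so the function
# can be tried against a fake directory tree.
list_data_disks() {
    local sysblock=${1:-/sys/block}
    local path name model
    for path in "$sysblock"/sd*; do
        [ -r "$path/device/model" ] || continue
        name=${path##*/}
        model=$(cat "$path/device/model")
        case "$model" in
            *"Universal Xport"*) continue ;;   # MD3000 management device
        esac
        echo "/dev/$name"
    done
}
```

Note that this still lists the local system disk (for example /dev/sda), so a real script would need to exclude that separately before partitioning or formatting anything.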

I suppose if we were running Red Hat Enterprise Linux, CentOS, SUSE, or Windows, this would have all worked a bit more smoothly, but I don’t want to run any of those. We have over 1000 Debian servers deployed and I have no plans to switch just because of Dell. I really wish Dell would make their stuff less distro-specific; it would make things easier for everyone.

Is anyone else successfully running this type of hardware configuration on Debian using multipath? Have you tested a failure? Do you have random I/O errors in your logs? Would love to hear stories and tips.

I have some more posts to write about our adventures in Dell MD land. The next one will be about getting Dell’s SMcli working on Debian, and then after that a post with some details of our MogileFS implementation.

* Thanks to the fine folks at Layered Tech for helping us tweak the MD3000 configuration throughout this process.
